Message boards : News : New D3RBanditTest workunits

| Author | Message |

|---|---|

|

Dears, | |

| ID: 56504 | Rating: 0 | rate:

| |

|

For (quick/rough) reference... | |

| ID: 56505 | Rating: 0 | rate:

| |

|

It would be nice if you at least gave a longer Deadline. | |

| ID: 56507 | Rating: 0 | rate:

| |

|

Yup, my old GT730 is out; it takes too much time. Unless the deadline gets more days, I'll stop accepting WUs. | |

| ID: 56508 | Rating: 0 | rate:

| |

|

Hi All! | |

| ID: 56509 | Rating: 0 | rate:

| |

"This WU took already 7 hours and total time is estimated for 38 hours on Mobile 2070 RTX. Newest drivers, i7 9th." Yes. These workunits are very long. "If so, I won't make it into the deadline" Set a low cache (0+0 days, or 0.01+0 days). You should abort them (at least the ones which haven't started yet). If you want to meet the 5 days deadline, you should let your laptop crunch 24/7. If you don't want that, then you should set GPUGrid to "no new tasks" for a while (until this batch is over). | |

| ID: 56510 | Rating: 0 | rate:

| |

|

My GTX 780 did it in ~ 50h. | |

| ID: 56512 | Rating: 0 | rate:

| |

|

I received a new workunit on Feb 10. Deadline is today. Stats show 32% readiness after 22.5 hours of GPU use. 48 more hours of GPU time is required to finish. Not practical and not doable for me. With such unit size and deadline combination I can certainly find better use for my GPU resources somewhere else. | |

| ID: 56513 | Rating: 0 | rate:

| |

|

I completed two running concurrently same machine on two GTX1060's in about 36.4 - 36.5 hours. Running two more about 30% completed in about 10 hours so far. Not too bad for lower end cards. | |

| ID: 56514 | Rating: 0 | rate:

| |

|

Sadly the deadline on the tasks is less than the amount of time it will take to complete it. | |

| ID: 56515 | Rating: 0 | rate:

| |

|

It should take about 50 hours on my GTX 1650. | |

| ID: 56517 | Rating: 0 | rate:

| |

|

Hello, | |

| ID: 56518 | Rating: 0 | rate:

| |

|

Funnily, it seems to be much faster on this PC with the GTX 1060 than on the other PC with the GTX 1650. They have different CPUs, this one an AMD Ryzen 5 1400 and the other an Intel i5 9400F. Does this make the difference? | |

| ID: 56519 | Rating: 0 | rate:

| |

|

Sorry, after a fast start to 10% the task went back to 0.66%. It seemed too good to be true. | |

| ID: 56520 | Rating: 0 | rate:

| |

"after a fast start to 10% the task went back to 0.66%." That seems to be the normal behavior for this current batch of ADRIA WUs. In BOINC Manager, once the task has settled at its low progress rate, select the task and click "Properties"; for the GTX 1650 graphics card it should show a progress rate of around 2.160% per hour. You can do the same with the GTX 1060 to compare values. The higher the progress rate, the shorter the completion time. | |
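The relation between progress rate and completion time can be sketched in a few lines of Python. This is only an illustration: the function name, the 0.80%/hour comparison value, and the assumption that the rate stays constant for the whole run are mine, not something BOINC reports.

```python
def hours_to_complete(rate_pct_per_hour: float, done_pct: float = 0.0) -> float:
    """Estimate remaining hours from a progress rate given in percent per hour.

    Assumes the rate stays constant for the rest of the run (an assumption).
    """
    if rate_pct_per_hour <= 0:
        raise ValueError("progress rate must be positive")
    return (100.0 - done_pct) / rate_pct_per_hour


DEADLINE_HOURS = 5 * 24  # the 5-day deadline discussed in this thread

# 2.160 is the value quoted for the GTX 1650; 0.80 is a made-up slower card.
for label, rate in [("GTX 1650", 2.160), ("hypothetical slower card", 0.80)]:
    total = hours_to_complete(rate)
    verdict = "fits within" if total <= DEADLINE_HOURS else "misses"
    print(f"{label}: about {total:.1f} h, {verdict} the {DEADLINE_HOURS} h deadline")
```

The estimate only holds if the card crunches around the clock; any hours the host is off or suspended have to be added on top.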

| ID: 56521 | Rating: 0 | rate:

| |

"I completed two running concurrently same machine on two GTX1060's in about 36.4 - 36.5 hours. Running two more about 30% completed in about 10 hours so far. Not too bad for lower end cards." My GTX 1060 3GB cards take ~40 hrs. My GTX 1650s need about 45 hrs. My GTX 750ti should have finished in 100 hrs, but I found it stalled (not drawing any power, even though it showed 99% usage), and after I paused and restarted it, it never got back up to 1%/hr. It needs 1 hour more than the 120 hr window provides. I'll try again with it overclocked gently. Please may we have a 144 hr expiration window so everybody gets a chance to crunch? For now there is work for older cards and AMD GPUs at FAH/COVID moonshot. https://foldingathome.org/ | |

| ID: 56522 | Rating: 0 | rate:

| |

|

The progress on GTX 1650 is 2.160%/hour; for GTX 1060 is 2.160%/hour. This seems to agree with my observations. The 1060 has only 3 GB of video memory, which was not sufficient for some Einstein@home gravitational waves tasks. The 1650 has 4 GB. These tasks use only 667 MB memory. | |

| ID: 56523 | Rating: 0 | rate:

| |

For now there is work for older cards and AMD GPUs at FAH/COVID moonshot. But it is not BOINC, that is the main issue in my case | |

| ID: 56524 | Rating: 0 | rate:

| |

|

What is a "18h on a 1080 Ti". | |

| ID: 56526 | Rating: 0 | rate:

| |

|

Typical D3RBanditTest workunits will take 18 hours or 65,000 seconds to calculate and return a result on a Nvidia GTX 1080 Ti video card. | |

| ID: 56528 | Rating: 0 | rate:

| |

|

Is there a minimum driver version or CUDA version required? | |

| ID: 56529 | Rating: 0 | rate:

| |

"Is there a minimum driver version or CUDA version required?" Yes, all the new ACEMD tasks here are CUDA 10.0 on Linux and CUDA 10.1 on Windows, so you need the appropriate drivers for that CUDA version. Linux, CUDA 10.0: >=410.48. Windows, CUDA 10.1: >=418.96. | |
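A quick way to check an installed driver against the minimums quoted above is to compare version numbers as integer tuples. This is a minimal sketch; the helper names and the example driver strings are illustrative assumptions, and only the two minimum versions come from the post.

```python
# Minimum driver versions quoted in the post above.
MIN_DRIVER = {
    "linux": (410, 48),    # CUDA 10.0
    "windows": (418, 96),  # CUDA 10.1
}


def parse_version(text: str) -> tuple:
    """Turn a driver string such as '461.40' into (461, 40)."""
    return tuple(int(part) for part in text.strip().split("."))


def driver_ok(platform: str, installed: str) -> bool:
    """True if the installed driver is at least the quoted minimum."""
    return parse_version(installed) >= MIN_DRIVER[platform.lower()]


print(driver_ok("windows", "461.40"))  # a newer driver
print(driver_ok("linux", "396.54"))    # an older driver
```

Comparing tuples avoids the trap of comparing versions as decimal numbers, where a minor version like 100 would wrongly sort below 96.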

| ID: 56530 | Rating: 0 | rate:

| |

|

My GTX 750ti appears to have been disqualified by the server. Despite over 1K WUs waiting to be sent my log says "Scheduler request completed: Got 0 new tasks" and "No tasks sent". | |

| ID: 56531 | Rating: 0 | rate:

| |

My GTX 750ti appears to have been disqualified by the server. Despite over 1K WUs waiting to be sent my log says "Scheduler request completed: Got 0 new tasks" and "No tasks sent". It's not just your GTX 750Ti. My GTX 1080Ti/Linux didn't receive work:

2021. febr. 16., Tuesday, 00:19:34 CET | GPUGRID | checking NVIDIA GPU

2021. febr. 16., Tuesday, 00:19:34 CET | GPUGRID | [work_fetch] set_request() for NVIDIA GPU: ninst 1 nused_total 0.00 nidle_now 1.00 fetch share 1.00 req_inst 1.00 req_secs 25920.00

2021. febr. 16., Tuesday, 00:19:34 CET | GPUGRID | NVIDIA GPU set_request: 25920.000000

2021. febr. 16., Tuesday, 00:19:34 CET | GPUGRID | [work_fetch] request: CPU (0.00 sec, 0.00 inst) NVIDIA GPU (25920.00 sec, 1.00 inst)

2021. febr. 16., Tuesday, 00:19:34 CET | GPUGRID | Sending scheduler request: To fetch work.

2021. febr. 16., Tuesday, 00:19:34 CET | GPUGRID | Requesting new tasks for NVIDIA GPU

2021. febr. 16., Tuesday, 00:19:35 CET | GPUGRID | work fetch suspended by user

2021. febr. 16., Tuesday, 00:19:36 CET | GPUGRID | Scheduler request completed: got 0 new tasks

2021. febr. 16., Tuesday, 00:19:36 CET | GPUGRID | No tasks sent

2021. febr. 16., Tuesday, 00:19:36 CET | GPUGRID | No tasks are available for New version of ACEMD

2021. febr. 16., Tuesday, 00:19:36 CET | GPUGRID | Project requested delay of 31 seconds

Also, my RTX 2080Ti/Windows didn't receive work:

2021. 02. 16. 0:23:06 | GPUGRID | checking NVIDIA GPU

2021. 02. 16. 0:23:06 | GPUGRID | [work_fetch] set_request() for NVIDIA GPU: ninst 1 nused_total 1.00 nidle_now 0.00 fetch share 1.00 req_inst 0.00 req_secs 23728.26

2021. 02. 16. 0:23:06 | GPUGRID | NVIDIA GPU set_request: 23728.255416

2021. 02. 16. 0:23:06 | GPUGRID | [work_fetch] request: CPU (0.00 sec, 0.00 inst) NVIDIA GPU (23728.26 sec, 0.00 inst)

2021. 02. 16. 0:23:06 | GPUGRID | Sending scheduler request: To fetch work.

2021. 02. 16. 0:23:06 | GPUGRID | Requesting new tasks for NVIDIA GPU

2021. 02. 16. 0:23:08 | GPUGRID | Scheduler request completed: got 0 new tasks

2021. 02. 16. 0:23:08 | GPUGRID | No tasks sent

2021. 02. 16. 0:23:08 | GPUGRID | No tasks are available for New version of ACEMD

2021. 02. 16. 0:23:08 | GPUGRID | Project requested delay of 31 seconds

Something broke in the scheduler, as the number of tasks in progress has decreased by about 1400. | |

| ID: 56532 | Rating: 0 | rate:

| |

|

I've managed my other host to get a new task by updating manually a couple of times, but the others still didn't get one. | |

| ID: 56533 | Rating: 0 | rate:

| |

|

Thanks Zoltan, I gave up for now and switched that GPU to FAH for now. | |

| ID: 56534 | Rating: 0 | rate:

| |

|

I have a 1660 super that takes about 34-38 hours on these new units, still seeing temps in the 60s, only uses 15% of gpu in task manager tho | |

| ID: 56535 | Rating: 0 | rate:

| |

I've managed my other host to get a new task by updating manually a couple of times, but the others still didn't get one. I haven't seen my systems have any issue with getting new tasks. but I do wonder what's going on with the massive shift of tasks from out in the field to waiting to be sent. are they erroring en masse somehow? today is about 5 days since these new tasks started showing up, so perhaps that's why. thousands of tasks hitting their deadlines from systems not fast enough to process, or systems that are fast enough if they run 24/7, but aren't processing 24/7, or systems that downloaded but were shut off for the past 5 days. or some combination of all 3. ____________  | |

| ID: 56536 | Rating: 0 | rate:

| |

|

I've noticed that I only received one task, and after it finished I got no more. I have a GTX 1650. Is this normal? | |

| ID: 56537 | Rating: 0 | rate:

| |

|

I have a dual GPU system, GTX 980 and GTX 1080 TI, and all of these work units have failed. Drivers are current as of December. I had to roll back the January update as it didn't play nice with Milkyway@Home while you folks were on Holiday. Suddenly I can't complete a work unit without error. | |

| ID: 56539 | Rating: 0 | rate:

| |

|

I'm not seeing many resends, mostly _0 and _1 original tasks. | |

| ID: 56540 | Rating: 0 | rate:

| |

|

Does the scheduler know the correct size estimate for these WUs? | |

| ID: 56544 | Rating: 0 | rate:

| |

"but I do wonder what's going on with the massive shift of tasks from out in the field to waiting to be sent. are they erroring en masse somehow? today is about 5 days since these new tasks started showing up, so perhaps that's why. thousands of tasks hitting their deadlines from systems not fast enough to process, or systems that are fast enough if they run 24/7, but aren't processing 24/7, or systems that downloaded but were shut off for the past 5 days. or some combination of all 3." In fact, allowing a certain time offset before overdue tasks are resent would effectively extend the deadline by that offset, giving slower GPUs time to report them. One example: WU #27023500 has been reported by a GTX 750 Ti at one of my hosts in 446,845.54 seconds, more than 4 hours after deadline. This task has been rewarded with 348,750.00 credits, and it hasn't been resent to any other host; the resend is marked "Didn't need". Maybe the project managers are quietly addressing the request of many GPUGrid users this way (?) | |

| ID: 56545 | Rating: 0 | rate:

| |

|

Do these work with Ampere? | |

| ID: 56546 | Rating: 0 | rate:

| |

"Do these work with Ampere?" No. | |

| ID: 56547 | Rating: 0 | rate:

| |

|

Ah damn, I'll keep waiting then :) | |

| ID: 56548 | Rating: 0 | rate:

| |

|

Re: New WU's and tuning GPU's | |

| ID: 56549 | Rating: 0 | rate:

| |

|

Re: New WU's and tuning GPU's | |

| ID: 56550 | Rating: 0 | rate:

| |

Dears, Re: New WU's and tuning GPU's Interested in OverClocking to reduce WU duration to hit target 'due date/times'? Even if your GPU is locked down you can improve by using a curve with Frequency and Voltage of your GPU auto managed. Message me if you have questions or need help. I'm crunching the new WU's with RTX2080 mobile with MSI Afterburner and MSI Kombustor linked (that auto overclocks the GPU with a good curve). I am getting 2 days and 2 hours for e20s2_e11s14p0f75-ADRIA_D3RBandit_batch1-0-1-RND2090. 1.08% per hour = 92.59 hours or 3.86 days. If you get despondent or cannot meet deadlines but want GPU points, check out Moo Wrapper. (28 minutes per WU on an RTX 2080 mobile) | |

| ID: 56551 | Rating: 0 | rate:

| |

"Re: New WU's and tuning GPU's" If your GPU is crunching a single workunit for days, it's not worth the risk of a computing error caused by overclocking. You'll receive 0 credits for a failed workunit (after many hours, even days of crunching, that's very frustrating). Therefore I do not recommend overclocking and especially overvolting a GPU, especially a mobile GPU. GPUGrid workunits are very power hungry compared to games or other projects (except for FAH). The cooling of an average GPU is made for general use, not for crunching 24/7. Laptops with mobile GPUs can't have coolers as big as discrete GPUs have in desktop PCs. If you have a GPU with decent cooling, then it's usually overclocked by the factory. In this case you don't have to overclock it more.

Power dissipation is a product of two key factors:
· It's in direct ratio with GPU frequency.
· It's in direct ratio with GPU voltage squared.

Say you raise the frequency and the voltage by 10% (it's a bit of an exaggeration, as you can't raise the GPU voltage by 10%). In this case the power dissipation of your GPU is raised by 33.1% (1.1 by the frequency, and 1.1*1.1=1.21 by the voltage, 1.1*1.21=1.331). Luckily you can't raise your GPU's power consumption above its limits set by the factory. You can check these limits from an administrative command prompt by

nvidia-smi -q -d power

Raising the GPU's power dissipation raises its temperature, as its cooling stays the same, while it should be better to achieve the same temperatures (and life expectancy). Usually you improve the cooling of your GPU only by raising the RPM of its fans, which could be very annoying (especially if it's a laptop); it also reduces the lifespan of the fans. | |
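The scaling rule in this post (power proportional to frequency times voltage squared) can be checked with a few lines of Python. This is a sketch of that simplified rule only; the function name is mine, and real cards are additionally capped by their factory power limit.

```python
def relative_power(freq_scale: float, volt_scale: float) -> float:
    """Relative power dissipation under the simplified rule P ~ f * V^2."""
    return freq_scale * volt_scale ** 2


# The example from the post: +10% frequency and +10% voltage.
print(round(relative_power(1.10, 1.10), 3))  # 1.331, i.e. +33.1%

# The same rule applied in the other direction: -10% on both.
print(round(relative_power(0.90, 0.90), 3))
```

Because voltage enters squared, a small voltage change moves power more than the same relative change in frequency.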

| ID: 56553 | Rating: 0 | rate:

| |

I'm not seeing many resends, mostly _0 and _1 original tasks. There’s no quorum required here. So _1 is a resend. ____________  | |

| ID: 56554 | Rating: 0 | rate:

| |

|

WU: | |

| ID: 56557 | Rating: 0 | rate:

| |

|

I agree. Overclocking GPU is much more likely to cause WU failure. | |

| ID: 56558 | Rating: 0 | rate:

| |

|

Task 27021821 was canceled by server. Why? It was waiting to run. | |

| ID: 56560 | Rating: 0 | rate:

| |

Task 27021821 was canceled by server. Why? It was waiting to run. http://www.gpugrid.net/workunit.php?wuid=27021821 because it was no longer needed. the original host returned the task after the deadline, but before you had processed it. since a quorum is not required, they only need one result. allowing you to process something which already has a result is just a waste of time. ____________  | |

| ID: 56561 | Rating: 0 | rate:

| |

I have a 1660 super that takes about 34-38 hours on these new units, still seeing temps in the 60s, only uses 15% of gpu in task manager tho Interesting. My 1660 Ti is completing these tasks in about 25 hours. I would not have thought that there would be that much of a lead in crunching time. You have a 2600 as well, I'm running a 2200G, so my theory of a slower CPU doesn't seem to hold here. EDIT: Oh hey, this is my first post here. Hi everyone! | |

| ID: 56562 | Rating: 0 | rate:

| |

|

the 1660ti is still faster than a 1660S. you're both on Windows so your times should be a little more comparable to my 1660S Linux times (27hrs). | |

| ID: 56564 | Rating: 0 | rate:

| |

|

Is it overclocking if you're underpowering???

sudo nvidia-xconfig --enable-all-gpus --cool-bits=28 --allow-empty-initial-configuration

Then execute this script:

#!/bin/bash
/usr/bin/nvidia-smi -pm 1 # enable persistence mode
/usr/bin/nvidia-smi -acp UNRESTRICTED # allow non-root changes to application clocks
/usr/bin/nvidia-smi -i 0 -pl 160 # cap the power limit of GPU 0 at 160 W
/usr/bin/nvidia-settings -a "[gpu:0]/GPUPowerMizerMode=1" #0=Adaptive, 1=Prefer Maximum Performance , 2=Auto
/usr/bin/nvidia-settings -a "[gpu:0]/GPUMemoryTransferRateOffset[3]=400" -a "[gpu:0]/GPUGraphicsClockOffset[3]=100"
| |

| ID: 56566 | Rating: 0 | rate:

| |

|

160W seems too low for a 2080ti IMO. you're really restricting performance at that point. | |

| ID: 56568 | Rating: 0 | rate:

| |

One example: WU #27023500 has been reported by a GTX 750 Ti at one of my hosts in 446,845.54 seconds, more than 4 hours after deadline. This is an interesting opportunity to study how the server handles tardy but completed WUs. My GTX 750ti was also allowed to run past the deadline and received equal credit to yours. https://www.gpugrid.net/workunit.php?wuid=27023657 Could it be that the server can detect a WU being actively crunched and stop cancellation? Or is this a normal delay function we see? It had already created another task but had not sent it yet, and marked it as "didn't need" when my host reported. Run time was 408,214.47 sec. CPU time was 406,250.30 sec GPU clock was 1366MHz Mem clock was 2833MHz With my homespun fan intake mod it ran at 55C with 22C ambient room temp. Just can't kill it. | |

| ID: 56569 | Rating: 0 | rate:

| |

"160W seems too low for a 2080ti" My circuit breakers are perfectly optimized. Not to mention heat management. I'm going to have to sit this race out. | |

| ID: 56570 | Rating: 0 | rate:

| |

One example: WU #27023500 has been reported by a GTX 750 Ti at one of my hosts in 446,845.54 seconds, more than 4 hours after deadline. I think it's probably just first come first serve. so whoever returns it first "wins" and the second other WU gets the axe. another user reported that his task was cancelled off of his host (but he hadn't started crunching yet). Unsure what would happen to a task that was axed while crunching had already started. ____________  | |

| ID: 56571 | Rating: 0 | rate:

| |

"160W seems too low for a 2080ti" "My circuit breakers are perfectly optimized. Not to mention heat management. I'm going to have to sit this race out." I understand. I actually have my 6x 2080ti host split between 2 breakers to avoid overloading a single 20A one (there are other systems on one of the circuits). I'm just saying that if you're going to restrict it that far, you might be better off with a cheaper card to begin with and save some money. | |

| ID: 56572 | Rating: 0 | rate:

| |

Thanks Zoltan, I gave up for now and switched that GPU to FAH for now. I have received another WU for the GTX 750ti. The send glitch has been remediated. I'm the 5th user to receive this WU iteration. https://www.gpugrid.net/workunit.php?wuid=27024256 It is a batch_1 (the 2nd batch) and the 0_1 refers to it being number 0 of 1, hence it's the only iteration that will exist of this WU. [edit] It just dawned on me that these WUs might be an experiment in having the same host perform all the generations of the model simulation consecutively instead of on different hosts. Or am I way off? | |

| ID: 56575 | Rating: 0 | rate:

| |

I'm not seeing many resends, mostly _0 and _1 original tasks. Uhh, Duh . . forgot where I'm at. | |

| ID: 56576 | Rating: 0 | rate:

| |

"another user reported that his task was cancelled off of his host (but he hadn't started crunching yet). Unsure what would happen to a task that was axed while crunching had already started." When a resent task has already started processing and the original is then reported by the overdue host, the resent task is allowed to run to the end, and three scenarios may occur:
- 1) The deadline for the resent task is reached at the second host. Then, even if it is completed afterwards, it won't receive any credits, because there is already a previous valid result for it. The server will label it "Completed, too late to validate".
- 2) What I call a "credit paradox" happens when the resent task is finished in time for the full bonus or mid bonus at the second host. It will still receive the standard credit amount without any bonus, to match the credit amount that the overdue task has already received.
- 3) When the resent task is finished past 48 hours but before its deadline, it will also receive the standard (no bonus) credit amount. | |

| ID: 56579 | Rating: 0 | rate:

| |

better off with a cheaper card to begin with and save some money. I guess you haven't been shopping for a GPU lately. Prices are really high. Perfect time to sell cards not buy them. Best thing I did was switch computers over to 240 V 20 A circuits. The most frequent problem I used to have was the A-phase getting out of balance with the B-phase and tripping one leg of the main breaker. With 240 V both phases are exactly balanced and PSUs run 6-8% more efficiently. Now if I could just find a diagnostic tool to tell me when a PSU is on its last leg. E.g, this Inline PSU Tester for $590 is pricey but looks like it's the most comprehensive I've found: https://www.passmark.com/products/inline-psu-tester/index.php If anyone knows of other brands please share. | |

| ID: 56581 | Rating: 0 | rate:

| |

better off with a cheaper card to begin with and save some money. I'm aware of the situation. but if you sell a 2080ti, and re-buy a 2070. you're still left with more money, no? even at higher prices, everything just shifts up because of the market. You're restricting the 2080ti so much that it performs similarly to a 2070S, so why have the 2080ti? that was my only point. I agree about 240V, and I normally run my systems in a remote location on 240V, but due to renovations, I have one system temporarily at my house. but if you're on 240V, why restrict it so much? I use the voltage telemetry (via IPMI) to identify when a PSU might be failing. ____________  | |

| ID: 56583 | Rating: 0 | rate:

| |

|

Are there prolonged CPU-heavy periods where GPU util drops nearly to zero? Otherwise, I'll probably have an issue with my card. Just ~2 hrs into a new WU and it stalled. BOINC manager reports steadily increasing processor time since last checkpoints but, GPU util has been at 0% for nearly 30 min. Is that normal? | |

| ID: 56587 | Rating: 0 | rate:

| |

Are there prolonged CPU-heavy periods where GPU util drops nearly to zero? Otherwise, I'll probably have an issue with my card. Just ~2 hrs into a new WU and it stalled. BOINC manager reports steadily increasing processor time since last checkpoints but, GPU util has been at 0% for nearly 30 min. Is that normal? not normal from my experience. mine stay pegged at 98% GPU utilization for the entire run. ____________  | |

| ID: 56589 | Rating: 0 | rate:

| |

"Are there prolonged CPU-heavy periods where GPU util drops nearly to zero? Otherwise, I'll probably have an issue with my card. Just ~2 hrs into a new WU and it stalled. BOINC manager reports steadily increasing processor time since last checkpoints but, GPU util has been at 0% for nearly 30 min. Is that normal?" No, that's not normal. I haven't seen that behavior myself. Are you using an app_config.xml to, say, run 2 WUs on the same GPU or max out the CPUs? This is mine:

<app_config>
  <app>
    <name>acemd3</name>
    <gpu_versions>
      <cpu_usage>1.00</cpu_usage>
      <gpu_usage>1.00</gpu_usage>
    </gpu_versions>
  </app>
  <project_max_concurrent>4</project_max_concurrent>
</app_config>

Might be something else you're running. | |

| ID: 56591 | Rating: 0 | rate:

| |

"I'm aware of the situation. but if you sell a 2080ti, and re-buy a 2070. you're still left with more money, no? even at higher prices, everything just shifts up because of the market. You're restricting the 2080ti so much that it performs similarly to a 2070S, so why have the 2080ti? that was my only point." I'm selling off my second string (1070s & 1080s) but keeping my 2080s. Waiting for RTC 3080s with the new design rules but that'll probably be April.

"I agree about 240V, and I normally run my systems in a remote location on 240V, but due to renovations, I have one system temporarily at my house. but if you're on 240V, why restrict it so much?" This is funny since you gave me the script in one of the GG threads and I've been grateful since it cut my electric bill by a third. My 100 Amp load center is maxed out. No wiggle room left.

"I use the voltage telemetry (via IPMI) to identify when a PSU might be failing." Sounds good but Dr Google threw so much stuff at me. Voltage telemetry can be so many different things. The IPMI article seemed to indicate that AMT might be better for me: https://en.wikipedia.org/wiki/Intel_Active_Management_Technology. Most of my PSUs have been running for about 7 years now and are starting to die of old age. Hard failures are nice since they're easy to diagnose. It's the flaky ones with intermittent problems that are hard. I swap parts between a good computer and a bad actor and sometimes I get lucky and I can convince myself that a PSU is over the hill. I have one now that after a couple of hours randomly stops communicating while remaining powered up. I need to put a head on it and play swapsies. Does the voltage telemetry you observe give you an unambiguous indication of the demise of a PSU??? | |

| ID: 56593 | Rating: 0 | rate:

| |

|

IPMI stands for Intelligent Platform Management Interface. | |

| ID: 56594 | Rating: 0 | rate:

| |

|

As Keith said, IPMI is the remote management interface built into the ASRock Rack and Supermicro motherboards that I use. They have voltage monitoring built in for the most part. It just measures the voltages that it sees at the 24-pin and reports it out over the IPMI interface. I access the telemetry via the dedicated webGUI that is provided at the configurable IP address on the LAN. | |

| ID: 56595 | Rating: 0 | rate:

| |

|

“RTC 3080s with new design rules” ? Huh? | |

| ID: 56596 | Rating: 0 | rate:

| |

|

Seems I did not get one yet, and I have a Geforce RTX 2060 | |

| ID: 56599 | Rating: 0 | rate:

| |

"Seems I did not get one yet, and I have a Geforce RTX 2060" According to the status page of your host, you have two, and you had 7 before. | |

| ID: 56600 | Rating: 0 | rate:

| |

|

Ok, maybe I was looking for a different name than what is there, so what are the names of the two new ones then? | |

| ID: 56602 | Rating: 0 | rate:

| |

|

GTX 1060 completed in 157,027.38 s | |

| ID: 56603 | Rating: 0 | rate:

| |

"Seems I did not get one yet, and I have a Geforce RTX 2060" "According to the status page of your host, you have two, and you had 7 before." "Ok, maybe I was looking for a different name than what is there, so what are the names of the two new ones then?" Click on the link in my reply, and you'll see. | |

| ID: 56605 | Rating: 0 | rate:

| |

“RTC 3080s with new design rules” ? Huh? They're switching some or all from Samsung 8 nm design rules to TSMC 7 nm design rules. Also an RTX 3080 Ti with more memory is expected. https://hexus.net/tech/news/graphics/146170-nvidia-shift-rtx-30-gpus-tsmc-7nm-2021-says-report/ | |

| ID: 56606 | Rating: 0 | rate:

| |

“RTC 3080s with new design rules” ? Huh? Oh, it was a typo on the RTX/RTC. They've had those rumors since pretty much launch (note the date on that article lol). Personally I wouldn't hold my breath. If they release anything, expect more paper launches with a few thousand cards available day one, then basically nothing for months again. But it's all moot for GPUGRID anyway until they get around to making the app compatible with Ampere. There are 5 different Ampere models that I've seen attempted to be used here (A100, 3090, 3080, 3070, 3060ti), and the 3060 is set for launch late February. More models are meaningless for us if we can't use them :( | |

| ID: 56607 | Rating: 0 | rate:

| |

its all moot for GPUGRID anyway until they get around to making the app compatible with Ampere. I bet they don't have an Ampere GPU to do development and testing on. | |

| ID: 56608 | Rating: 0 | rate:

| |

|

The thing is. They don’t even need to. They can just download the new CUDA toolkit, edit a few arguments in the config file or make file and re-compile the app as-is. It’s not a whole lot of work. And it’ll work. They basically just need to unlock the new architecture. The app now doesn’t even try to run, it fails at the architecture check. | |

| ID: 56609 | Rating: 0 | rate:

| |

"I have a dual GPU system, GTX 980 and GTX 1080 TI, and all of these work units have failed. Drivers are current as of December. I had to roll back January update as it didn't play nice with Milkyway@Home while you folks were on Holiday. Suddenly I can't complete a work unit without error." Running for how many hours a day? The GTX 1080 Ti should be adequate if you run it 24 hours a day, but I'm not sure if the GTX 980 will be. | |

| ID: 56611 | Rating: 0 | rate:

| |

|

I'm talking about the new test units that were being talked about by ADMIN, not WUs from ACEMD, and if they have similar names then I wouldn't know. But they are most certainly not taking 18 hours to crunch, they are taking the same amount of time to crunch so I think they are the same WUs that I have been getting for six months, not the new ones listed at the top of this thread. | |

| ID: 56612 | Rating: 0 | rate:

| |

"I'm talking about the new test units that were being talked about by ADMIN," These workunits are the same as the "test" batch.

"not WUs from ACEMD," ACEMD is the app that processes all of the GPUGrid workunits, regardless of their size.

"and if they have similar names than I wouldn't know." They are named like: e22s16_e2s343p0f9-ADRIA_D3RBandit_batch2-0-1-RND4443_1

"But they are most certainly not taking 18 hours to crunch," They take about 72,800~74,000 seconds on your host with an RTX 2060. That is 20h 13m ~ 20h 33m.

"they are taking the same amount of time to crunch so I think they are the same WUs that I have been getting for six months, not the new ones listed at the top of this thread." They are definitely not the same, as those took less than 2 hours on a similar GPU. | |
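The run times on the task pages are given in seconds, which makes them hard to compare with the "18 hours" figure. A small helper (hypothetical name, plain integer arithmetic, seconds beyond the last full minute dropped) converts them:

```python
def hm(seconds: float) -> str:
    """Format a run time in seconds as hours and whole minutes."""
    minutes_total = int(seconds // 60)  # drop the leftover seconds
    hours, minutes = divmod(minutes_total, 60)
    return f"{hours}h {minutes:02d}m"


# Run times quoted in this thread, in seconds.
for run_time in (72_800, 74_000, 157_027.38):
    print(f"{run_time} s = {hm(run_time)}")
```

For the two RTX 2060 values this gives 20h 13m and 20h 33m, matching the figures in the reply above.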

| ID: 56613 | Rating: 0 | rate:

| |

"I have a dual GPU system, GTX 980 and GTX 1080 TI, and all of these work units have failed. Drivers are current as of December. I had to roll back January update as it didn't play nice with Milkyway@Home while you folks were on Holiday. Suddenly I can't complete a work unit without error." They have not all failed. You actually have a few that were submitted fine. See your tasks for that system here: http://www.gpugrid.net/results.php?hostid=514949

Of your errors:

00:31:16 (11412): wrapper: running acemd3.exe (--boinc input --device 0)
00:28:21 (11224): wrapper: running acemd3.exe (--boinc input --device 0)
00:28:21 (3488): wrapper: running acemd3.exe (--boinc input --device 1)
23:35:51 (3068): wrapper: running acemd3.exe (--boinc input --device 0)
23:39:12 (1400): wrapper: running acemd3.exe (--boinc input --device 1)
23:39:12 (4088): wrapper: running acemd3.exe (--boinc input --device 0)

So it's clear what's causing your issue. You're either routinely starting and stopping BOINC computation, or you have some task switching with other projects going on due to the long run of these tasks, which sometimes results in the process restarting on a different card. It's fairly well known that this will result in an error for GPUGRID tasks. You should increase the time threshold for task switching to longer than the run of these tasks (24 hrs?), and avoid interrupting the computation. Do not turn off the computer or let it go to sleep. I would probably even avoid the use of the "suspend GPU while computer is in use" option in BOINC. Anything to avoid interrupting these very long tasks. | |
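Spotting a restart on a different card can be automated by pulling the `--device` number out of each wrapper line in a task's stderr output. The sketch below assumes the line format shown in the post; the function names are mine, and treating the two sample lines as belonging to one task is an assumption made for illustration.

```python
import re

# Matches lines like:
# 00:28:21 (11224): wrapper: running acemd3.exe (--boinc input --device 0)
WRAPPER_LINE = re.compile(
    r"(?P<time>\d{2}:\d{2}:\d{2}) \((?P<pid>\d+)\): "
    r"wrapper: running \S+ \(.*--device (?P<device>\d+)\)"
)


def devices_used(stderr_text: str) -> list:
    """Return the device index of every wrapper start, in order."""
    return [int(m.group("device")) for m in WRAPPER_LINE.finditer(stderr_text)]


def restarted_on_other_device(stderr_text: str) -> bool:
    """True if the task was started on more than one GPU."""
    return len(set(devices_used(stderr_text))) > 1


# Two lines in the format quoted above, assumed here to come from one task.
sample = (
    "23:35:51 (3068): wrapper: running acemd3.exe (--boinc input --device 0)\n"
    "23:39:12 (1400): wrapper: running acemd3.exe (--boinc input --device 1)\n"
)
print(devices_used(sample), restarted_on_other_device(sample))
```

More than one wrapper line in a single task's output already means the task was interrupted at least once; differing device numbers point to the restart-on-another-card case described above.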

| ID: 56614 | Rating: 0 | rate:

| |

you should increase the time threshold for task switching to longer than the run of these tasks (24 hrs?), and avoid interrupting the computation. Try setting Resource=Zero on the other program you wish to time-slice with. Then it should only send you its WU if you have no GG WUs left. | |

| ID: 56615 | Rating: 0 | rate:

| |

|

true, I do this. | |

| ID: 56616 | Rating: 0 | rate:

| |

"I have a 1660 super that takes about 34-38 hours on these new units, still seeing temps in the 60s, only uses 15% of gpu in task manager tho" Click on the GPU graph on the left pane, then on the right pane select "Cuda" instead of "3D" on the top left subgraph. | |

| ID: 56617 | Rating: 0 | rate:

| |

"It just dawned on me that these WUs might be an experiment in having the same host perform all the generations of the model simulation consecutively instead of on different hosts. Or am I way off?" You are right, this batch consists of threads having only one single, but very long, generation, making a single workunit the whole thread of the batch. It's not an experiment, as this is not the first time such a batch has been issued in GPUGrid's history. In this way the progress of a batch is much faster, as the project doesn't have to wait for many*5 days for a thread to finish. As the workunits get assigned randomly to hosts, it's certain that some of the generations of a multiple-generation simulation will be assigned to slow or unreliable hosts, which adds significant latency to completing a single thread of a simulation. | |

| ID: 56620 | Rating: 0 | rate:

| |

|

Sorry, I took the last cookie... ;-) | |

| ID: 56621 | Rating: 0 | rate:

| |

Sorry, I took the last cookie... ;-) don't worry, in 4-5 days there will be plenty more resends available from all the hit-n-runs and systems that are too slow. ____________  | |

| ID: 56622 | Rating: 0 | rate:

| |

|

These tasks are too big for my 1050ti | |

| ID: 56626 | Rating: 0 | rate:

| |

in 4-5 days there will be plenty more resends available from all the hit-n-runs and systems that are too slow. but this cannot be the purpose of the exercise, or can it? | |

| ID: 56628 | Rating: 0 | rate:

| |

|

looks like some new tasks are being loaded up. I've received several _0s | |

| ID: 56634 | Rating: 0 | rate:

| |

|

I've got some of these new batch0 tasks too. | |

| ID: 56635 | Rating: 0 | rate:

| |

|

I have CUDA: NVIDIA GPU 0: GeForce GTX 1650 (driver version 461.40, CUDA version 11.2, compute capability 7.5, 4096MB, 3327MB available, 2849 GFLOPS peak) | |

| ID: 56636 | Rating: 0 | rate:

| |

|

Hours? | |

| ID: 56637 | Rating: 0 | rate:

| |

Hours? about 172,897.00 seconds http://www.gpugrid.net/results.php?hostid=526190 | |

| ID: 56638 | Rating: 0 | rate:

| |

|

RTX3090 just repeats Computation error. | |

| ID: 56639 | Rating: 0 | rate:

| |

RTX3090 just repeats Computation error. As yet, the RTX 3xxx series is not supported by GPUGrid. If you own such a card, please set "No new tasks" for GPUGrid until further notice. | |

| ID: 56640 | Rating: 0 | rate:

| |

I have CUDA: NVIDIA GPU 0: GeForce GTX 1650 (driver version 461.40, CUDA version 11.2, compute capability 7.5, 4096MB, 3327MB available, 2849 GFLOPS peak) This is normal for that GPU. These tasks are very long and that GPU is pretty weak. ____________  | |

| ID: 56641 | Rating: 0 | rate:

| |

|

Seeking some help if possible. I have had only one of the new work units download to my computer on 15 Feb. Unfortunately, it was interrupted by a power loss in my area, and when power came back on I discovered the Nvidia driver had somehow been corrupted. After the fix, the WU ended up with a compute error. The problem is that since then I have not received any more WUs, either automatically or during multiple Updates in BOINC. Can someone look at my computer and see what I may have set wrong (driver etc) that may be preventing me getting work units? I have checked what my limited knowledge allows. Thank you for any help. | |

| ID: 56642 | Rating: 0 | rate:

| |

|

It appears that the drivers are not installed correctly. No driver is reported by BOINC. | |

| ID: 56643 | Rating: 0 | rate:

| |

|

Thank you! It worked. Odd, since it was working on Einstein. Just for my ongoing learning, where did you see that no driver was being reported to GPUGRID? Again, thank you for your help. | |

| ID: 56644 | Rating: 0 | rate:

| |

|

If you look at your host details here: http://www.gpugrid.net/hosts_user.php?userid=524258 | |

| ID: 56645 | Rating: 0 | rate:

| |

|

My ole Nvidia 1080 is turning new WU's around in about 25 hours. | |

| ID: 56646 | Rating: 0 | rate:

| |

So I'm stuck with F@H for the new sports car. You might not consider yourself as having been 'stuck' if your host tests the candidate that turns out to be the magic bullet for curing COVID-19. You can also brag that you are a part of the world's largest supercomputer when you crunch for Greg. 2.8 million "donors" and rising. If you're a "points person" you'll be gratified by the very generous credit they award there, even though it's not BOINC applicable. Too bad Bowman Lab and FAH left the Berkeley format IMO (I dislike all things proprietary), but the COVID moonshot is too important not to participate in, as a cruncher who is focused on promoting science which is urgently relevant. I just remind myself that this is not all about me. Together we crunch / To check out a hunch, / But wish that our credit / Would just buy our lunch. (traditional) | |

| ID: 56647 | Rating: 0 | rate:

| |

|

The WorldCommunityGrid has started releasing some GPU tasks of OpenPandemicsbeta but I haven't been lucky to get them. | |

| ID: 56648 | Rating: 0 | rate:

| |

The WorldCommunityGrid has started releasing some GPU tasks of OpenPandemicsbeta but I haven't been lucky to get them. Luckily I got some of them. It looks like WCG is looking for a suitable WU size. Excitingly, they seem to be much faster than the CPU version. | |

| ID: 56656 | Rating: 0 | rate:

| |

|

Hey Toni! | |

| ID: 56658 | Rating: 0 | rate:

| |

|

I don't think you should think of it as a "penalty" you're not getting penalized, just not getting the quick return bonus since you didn't make the cutoff. | |

| ID: 56659 | Rating: 0 | rate:

| |

|

I have quite a high ERROR rate on this host: http://www.gpugrid.net/results.php?hostid=523675 | |

| ID: 56664 | Rating: 0 | rate:

| |

|

I did not see a GTX960 mentioned here. BOINC thinks the new WU will take 3 hours to complete. Is that accurate? | |

| ID: 56665 | Rating: 0 | rate:

| |

I did not see a GTX960 mentioned here. BOINC thinks the new WU will take 3 hours to complete. Is that accurate? Not yet. After the first one has completed, subsequent estimates will be more realistic. With the current tasks, I'd guess something in the range 1.5 days - 2 days. I ditched my 970s last year, because I could see the writing on the wall - after a good few years of faithful service, they were no longer fit to match the current beasts. I went for 1660 (super or Ti) instead. This project tends to run different sub-projects, working with data and parameters from different researchers. And they don't reset the task estimates when they change the jobs. Your card would have been very comfortable with the previous run, but not so happy with this one. Only time will tell what the next one will be - we tend not to find out until after it's started. | |

| ID: 56666 | Rating: 0 | rate:

| |

I have quite a high ERROR rate on this host: http://www.gpugrid.net/results.php?hostid=523675 “Particle coordinate is nan” is usually too much overclocking. Or card too hot causing instability. Remove any overclock and ensure the card has good airflow for reasonable temps. The other errors might be driver related. Try to remove the old drivers with DDU from safe mode, be sure to select the option in the settings to prevent Windows from automatically installing drivers. Then go to nvidia’s website and download the latest drivers for your system, selecting custom install and clean install during the install process. That would be my next steps. ____________  | |

| ID: 56668 | Rating: 0 | rate:

| |

... download the latest drivers for your system ... I'm always a bit cautious about going for 'the latest' of anything. Driver release is usually driven by gaming first, and sometimes they break other things - like computing for science - along the way. I usually go for the final, bugfix, sub-version of the previous major release. | |

| ID: 56669 | Rating: 0 | rate:

| |

... download the latest drivers for your system ... whatever suits your fancy. The important bit is to totally wipe the old drivers, and do not allow Microsoft to auto-install their own, and do a clean install of the package provided by Nvidia. (I prefer to avoid Geforce Experience as well, but up to you I guess). ____________  | |

| ID: 56670 | Rating: 0 | rate:

| |

The other errors might be driver related. Try to remove the old drivers with DDU from safe mode, be sure to select the option in the settings to prevent Windows from automatically installing drivers. Then go to nvidia’s website and download the latest drivers for your system, selecting custom install and clean install during the install process. It is a Linux Box... I switched already back to Nouveau driver. Restarted - no image… luckily I was able to restart with GRUB (recovery mode). After that I was able to install latest Nvidia Driver again. Hope this will solve the problem! | |

| ID: 56671 | Rating: 0 | rate:

| |

The other errors might be driver related. Try to remove the old drivers with DDU from safe mode, be sure to select the option in the settings to prevent Windows from automatically installing drivers. Then go to nvidia’s website and download the latest drivers for your system, selecting custom install and clean install during the install process. apologies, i must have read your previous post too quickly and thought you said it was a windows system. I'd still try to reinstall the drivers, and do a full uninstall/purge/reinstall. also, you should make sure the system isnt going to sleep or hibernation or anything like that. if you can make sure the computation isnt interrupted that seems to run the best in my experience. ____________  | |

| ID: 56674 | Rating: 0 | rate:

| |

|

As of the date of the post, all work units had failed. I didn't complete one successfully until the 17th.

9 Error while computing, 3 Completed and validated, 1 Cancelled by server

| |

| ID: 56676 | Rating: 0 | rate:

| |

|

Is there no way to make the work units smaller so those of us that have older systems can still participate in the project? | |

| ID: 56677 | Rating: 0 | rate:

| |

As of the date of the post, all work units had failed. I didn't complete one successfully until the 17th. from what I can infer from other posts, this project has never had, nor promised, a homogeneous supply of tasks. it seems like the relatively small MDADs that we had for several months were the exception. as to your errors, on your single GTX 660 host. you were given two tasks. but that GPU is too slow to complete a single task in the 5-day limit, let alone two. it looks like you started one, and the other sat waiting until it hit the deadline, at which point it was canceled for not having started yet. this is standard BOINC behavior. your other task appears to still be in-progress on your system even though it's past the deadline. you may as well just cancel that unit, since it was already sent out to another system, and received a valid result 4 days ago. even if you continue crunching it and submit it, it's unlikely that you will receive any credit for it. I would just cancel it and set NNT for GPUGRID on that system until suitable WUs are available here again. http://www.gpugrid.net/workunit.php?wuid=27025213 as to your other system with 2 GPUs. almost all of the errors are for the same reason: ERROR: src\mdsim\context.cpp line 318: Cannot use a restart file on a different device! this is a known problem with the app here. if a task is interrupted, and tries to restart on a different device, you are likely to get this error. the only real solution is to not interrupt the task. which means not stopping computation and not turning the system off. I understand that not everyone wants to operate this way, but there are also several other projects to choose from that will allow you to operate this way. Folding@home seems to be a popular choice for folks around here for older nvidia cards, or for people who wish to contribute less often/less resources. ____________  | |

| ID: 56678 | Rating: 0 | rate:

| |

Is there no way to make the work units smaller so those of us that have older systems can still participate in the project? no way to make the WUs smaller. we get what the project gives us. you cannot manipulate those tasks client-side at all. ____________  | |

| ID: 56679 | Rating: 0 | rate:

| |

it seems like the relatively small MDADs that we had for several months was the exception. yes and no. until some time ago, there were so-called "short runs" and "long runs" (you can still see this when looking at the lower left section in the server status page), and the user could choose in his/her settings. The small MDADs we recently got would definitely have fallen under "short runs". But never before were there such long runs as the current series, not even under "long runs". So, as I said before, for users with older cards it would help if the 5-day deadline were extended by 1 or 2 days. No idea why this is not being done :-( | |

| ID: 56682 | Rating: 0 | rate:

| |

|

short runs seem to be defined as 2-3 hrs on the fastest card. the MDADs were way shorter than that. running only about 15-20mins on a 2080ti. I'd say that's out of the norm for the project history. | |

| ID: 56683 | Rating: 0 | rate:

| |

short runs seem to be defined as 2-3 hrs on the fastest card. ... these definitions on the server status page: Short runs (2-3 hours on fastest card) Long runs (8-12 hours on fastest card) have been like this over many years. It's never been changed, as far as I can remember. | |

| ID: 56684 | Rating: 0 | rate:

| |

It's never been changed, as far as I can remember. But "the fastest card", being a relative term, has certainly changed its meaning over the years. | |

| ID: 56685 | Rating: 0 | rate:

| |

It's never been changed, as far as I can remember. This relative term is used intentionally for practical reasons: the staff don't have to change this definition at the release of every new GPU generation, instead they can release longer workunits. | |

| ID: 56690 | Rating: 0 | rate:

| |

|

Will there be any new work or is the batch over now? | |

| ID: 56695 | Rating: 0 | rate:

| |

Will there be any new work or is the batch over now? maybe just resends at the moment. ____________  | |

| ID: 56697 | Rating: 0 | rate:

| |

|

"OpenPandemics for GPU | |

| ID: 56714 | Rating: 0 | rate:

| |

|

There are beta tasks already released, but they are few and I haven't received any. They last only a few minutes. | |

| ID: 56715 | Rating: 0 | rate:

| |

Greetings everyone, They're getting closer! | |

| ID: 56716 | Rating: 0 | rate:

| |

|

They must be having a hard time getting them long enough. | |

| ID: 56717 | Rating: 0 | rate:

| |

They must be having a hard time getting them long enough. Really length isn’t important as long as the project can compile the results. SETI had work units that only ran for less than a minute on fast GPUs. But longer tasks ideally would be more time efficient, less wasted time between tasks. ____________  | |

| ID: 56718 | Rating: 0 | rate:

| |

|

I guess, it would be a perfect place then to shift the slower cards to if they don't finish tasks in time here. My 750 Ti and 970 will do work over there as soon as they ship the production ready version of the app. | |

| ID: 56719 | Rating: 0 | rate:

| |

|

I have an odd question regarding these work units. Was the credit awarded dependent upon how quickly they were completed? They all had the same application identifier as far as I could tell, and they all took about the same amount of processor time to complete. They seemed to award 3 different amounts of credit, either approximately 348,000 points, 435,000 or 520,000. I completed about a dozen and the longer I took the less credit given. The ones I returned in less than 2 days all awarded 435,000 points, those that took 3 days or longer all awarded 348,000. Was this an actual factor or just a wild coincidence? | |

| ID: 56720 | Rating: 0 | rate:

| |

|

Yes. Tasks returned in under 24hrs get a 50% bonus. Under 48hrs get a 25% bonus. And tasks between 2-5 days get normal base credit. | |

| ID: 56721 | Rating: 0 | rate:

| |
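As a worked example of the bonus tiers described above, a small Python sketch. The base credit of 348,750 is taken from task results quoted elsewhere in this thread; the function name and the exact handling of the 24 h and 48 h boundaries are assumptions.

```python
def credit(base, turnaround_hours):
    """Credit for a task returned `turnaround_hours` after it was sent."""
    if turnaround_hours < 24:
        return base * 1.50  # 50% bonus for a return in under 24 hours
    if turnaround_hours < 48:
        return base * 1.25  # 25% bonus for a return in under 48 hours
    return base             # base credit between 2 and 5 days


BASE = 348_750.0  # base credit seen for these work units in this thread

for hours in (20, 36, 72):
    print(hours, credit(BASE, hours))
```

This gives 523,125 / 435,937.5 / 348,750, which lines up with the three credit amounts of roughly 520,000, 435,000 and 348,000 asked about above.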

|

Can we know what exactly we are crunching? | |

| ID: 56722 | Rating: 0 | rate:

| |

|

Normally we don't know any specifics until a paper is generated and cites the workunits that were used for the investigation. | |

| ID: 56725 | Rating: 0 | rate:

| |

|

Ah, thank you. I wish I had known! It took my 1070 GPU's about 27 hours to complete them but I kept most of my resources on other projects and only made sure to return them before they timed out. I could have picked up a few hundred thousand more points and got my 100 million molecule. Oh well... | |

| ID: 56727 | Rating: 0 | rate:

| |

|

as of this morning, on one of my machines I had still 2 tasks: one was running, the other one waiting. An hour later I noticed that the latter one was "aborted by server". | |

| ID: 56730 | Rating: 0 | rate:

| |

as of this morning, on one of my machines I had still 2 tasks: one was running, the other one waiting. An hour later I noticed that the latter one was "aborted by server". That task was a resend, the server cancelled it because the host originally assigned was finally able to finish and deliver it back. The server first checked that it was not being crunched on your host. | |

| ID: 56731 | Rating: 0 | rate:

| |

as of this morning, on one of my machines I had still 2 tasks: one was running, the other one waiting. An hour later I noticed that the latter one was "aborted by server". so if the task was still being crunched on the other host (and finally got finished there) - why was it then ever sent to me, too? | |

| ID: 56732 | Rating: 0 | rate:

| |

as of this morning, on one of my machines I had still 2 tasks: one was running, the other one waiting. An hour later I noticed that the latter one was "aborted by server". Because it was already over 5 days since that host downloaded the unit, so beyond the deadline for sending a new instance of the wu. It is the standard BOINC way of working (each project sets its own deadlines). | |

| ID: 56733 | Rating: 0 | rate:

| |

Because it was already over 5 days since that host downloaded the unit, so beyond the deadline for sending a new instance of the wu. oh, okay, I was not aware of that. The interesting thing is: I received it 2 days ago. So if the original host finished it this morning, the task must have been 7 days "old" then (and obviously got credit). Recently, one of my slower hosts finished a task after 5 days plus a few hours, and it was not accepted any more. No credits: "too late". How does this fit together? | |

| ID: 56734 | Rating: 0 | rate:

| |

Because it was already over 5 days since that host downloaded the unit, so beyond the deadline for sending a new instance of the wu. What I think could have occurred is that the server issued a new wu once yours was over 5 days and that the new host crunched and delivered the result before you finished yours. In that situation you should normally receive no credit. I can't find that wu in your hosts, if you can point to it I will have a look. | |

| ID: 56735 | Rating: 0 | rate:

| |

Because it was already over 5 days since that host downloaded the unit, so beyond the deadline for sending a new instance of the wu. I told you this before what happened in that case. There’s some grace period where if you return a result that has already been received by another host, you’ll still get credit. I’m guessing it’s about 1 day. Maybe less. In that case you returned it 4 days after the first result. So you missed the validate period. If the person returned it in 7 days, but they were the first to return it, they get credit. Doesn’t matter if it’s late, if you’re first you will get credit. It’s a good thing that the project cancelled that WU from your host to prevent unnecessary and wasted computation. You would have spent another 5 days crunching something that they already have a result for, then you would have not received credit, and been upset about that. ____________  | |

| ID: 56736 | Rating: 0 | rate:

| |

I can't find that wu in your hosts, if you can point to it I will have a look. here it is: https://www.gpugrid.net/result.php?resultid=32550373 | |

| ID: 56741 | Rating: 0 | rate:

| |

... There’s some grace period where if you return a result that has already been received by another host, you’ll still get credit. I’m guessing it’s about 1 day. Maybe less. so how long is the grace period? about 1 day? or less? Or any time longer? Or are there different types of grace periods? This system is somewhat obscure, anyway. | |

| ID: 56742 | Rating: 0 | rate:

| |

|

A true, formal, grace period would result in the deadline shown on the website being a day or a few later than the deadline shown on your computer at home. The BOINC client will try to finish the job by the deadline shown locally, but provided it's returned by the website deadline, nothing is lost. But we don't use that here. | |

| ID: 56743 | Rating: 0 | rate:

| |

|

it's certainly informal. I don't know how long the grace period is, I'm just using my own experience to make an educated guess about the ~1 day length. but it's certainly shorter than the 4 days from Erich's previous situation since he got a validate error when he returned it.

(columns: task, host, sent, reported, status, run time, CPU time, credit, application)
32544714 483418 21 Feb 2021 | 21:59:03 UTC 22 Feb 2021 | 23:19:24 UTC Error while computing 64,567.96 64,163.00 --- New version of ACEMD v2.11 (cuda101)
32547573 564623 23 Feb 2021 | 1:58:35 UTC 28 Feb 2021 | 3:37:59 UTC Completed and validated 170,762.44 108,992.30 348,750.00 New version of ACEMD v2.11 (cuda101)
32550287 543446 28 Feb 2021 | 1:58:40 UTC 28 Feb 2021 | 18:52:42 UTC Completed and validated 60,467.52 60,460.20 348,750.00 New version of ACEMD v2.11 (cuda100)

host before me blew their deadline
it was sent to me for crunching (i started it nearly right away due to small cache on this host)
host before me returned their result 2hrs after deadline, got base credit
i crunched it for 17hrs, returned it 15hrs after previous host, also got base credit. ____________  | |

| ID: 56745 | Rating: 0 | rate:

| |
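The timeline in the post above can be checked with a few lines of Python. This is only a sketch: the timestamp format string is written to match how the dates appear in the quoted results, the helper names are made up, and the 5-day deadline is the one discussed throughout this thread.

```python
from datetime import datetime, timedelta

FMT = "%d %b %Y | %H:%M:%S UTC"   # e.g. "23 Feb 2021 | 1:58:35 UTC"
DEADLINE = timedelta(days=5)      # deadline for these work units


def parse(ts):
    """Parse a timestamp as shown in the task list."""
    return datetime.strptime(ts, FMT)


def lateness(sent, reported):
    """Time past the 5-day deadline (negative if returned in time)."""
    return parse(reported) - (parse(sent) + DEADLINE)


# Second host in the list: sent 23 Feb, reported 28 Feb.
late = lateness("23 Feb 2021 | 1:58:35 UTC", "28 Feb 2021 | 3:37:59 UTC")

# Gap between that late result and the resend host's result.
gap = parse("28 Feb 2021 | 18:52:42 UTC") - parse("28 Feb 2021 | 3:37:59 UTC")

print(late)  # 1:39:24
print(gap)   # 15:14:43
```

So the late result came in a little under 2 hours past the deadline, and the second result about 15 hours after it, with both receiving base credit.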

|

i can`t get any WU! why@@? | |

| ID: 56747 | Rating: 0 | rate:

| |

i can`t get any WU! why@@? none available right now. ____________  | |

| ID: 56748 | Rating: 0 | rate:

| |

i know i've returned a result that was 12+hrs past the return of the previous person (who blew their 5-day deadline, but returned it a few hours after it was sent to me). This agrees with my own experience. Your case matches scenario number 2 in this previous post. it's certainly informal. I don't know how long the grace period is, I'm just using my own experience to make an educated guess about the ~1 day length. but it's certainly shorter than the 4 days from Erich's previous situation since he got a validate error when he returned it. I think that there isn't a fixed grace period. The only criterion is getting a valid result for each workunit. And the chance of these credit inconsistencies increases when (like now) the work units available are only resends. | |

| ID: 56751 | Rating: 0 | rate:

| |

And the chance of these credit inconsistencies increases when (like now) the work units available are only resends. this statement seems perfectly correct :-) | |

| ID: 56752 | Rating: 0 | rate:

| |

|

New Gerard tasks? | |

| ID: 56753 | Rating: 0 | rate:

| |

New Gerard tasks? these pop up from time to time. always only a handful of them. they're a rare gem. not enough to feed the masses though. ____________  | |

| ID: 56754 | Rating: 0 | rate:

| |

New Gerard tasks? I got 3 of them this morning (1_3-GERARD_pocket_discovery_...), and after they had waited a few hours in the queue, the server aborted them - "202 (0xca) EXIT_ABORTED_BY_PROJECT" :-) | |

| ID: 56755 | Rating: 0 | rate:

| |

|

I got two of them. They started processing right away. I have GPUGRID set to resource share of 100 and my other GPU project (Einstein) set to 0. So when I get GPUGRID tasks, they take priority over any backup project work already in the queue and begin right away. | |

| ID: 56756 | Rating: 0 | rate:

| |

I got two of them. They started processing right away. I had a GPUGRID task running, so the downloaded tasks were in waiting position. Had I known that they will disappear that soon, I would have interrupted the running task for short time, in order to get at least one of the three newly downloaded tasks started (thus preventing it from being aborted by the server). Well, next time I know :-) | |

| ID: 56757 | Rating: 0 | rate:

| |

I got two of them. They started processing right away. I have GPUGRID set to resource share of 100 and my other GPU project (Einstein) set to 0. So when I get GPUGRID tasks, they take priority over any backup project work already in the queue and begin right away. Thanks! Didn't know I could do that. I had suspended Einstein so it would pick up the GPUGRID work. Now I have Einstein set to 0% and GPUGRID to 100%. | |

| ID: 56758 | Rating: 0 | rate:

| |

... and begin right away. Don't expect them to run instantly. But 'next in queue' when an Einstein task completes is usually good enough. | |

| ID: 56759 | Rating: 0 | rate:

| |

... and begin right away. No problem. The Einstein jobs are taking less than 20 minutes currently. | |

| ID: 56760 | Rating: 0 | rate:

| |

|

Correct me if I am wrong but the [deadline] is the date and time the task has to be started by NOT completed. It is a deadline for the task start to be completed not the start finish. | |

| ID: 56764 | Rating: 0 | rate:

| |

Correct me if I am wrong but the [deadline] is the date and time the task has to be started by NOT completed. It is a deadline for the task start to be completed not the start finish. If the task isn’t completed and returned by the deadline, it gets sent to another host. You can still submit it late, but the project really wants the result before the deadline. ____________  | |

| ID: 56765 | Rating: 0 | rate:

| |

|

Good | |

| ID: 56766 | Rating: 0 | rate:

| |

|

Welcome to Gpugrid | |

| ID: 56767 | Rating: 0 | rate:

| |

|

No WUs again? Was there a problem with the longer ones? I have not seen any WUs from GPUgrid for at least 1-2 weeks now. | |

| ID: 56776 | Rating: 0 | rate:

| |

|

Work units are currently so scarce, that the chance to get one of them is like winning the lottery... ;-) | |

| ID: 56777 | Rating: 0 | rate:

| |

|

Maybe somebody's done a rain dance, and some WUs are flowing just now... | |

| ID: 56778 | Rating: 0 | rate:

| |

|

WOOOOO. got a full tank now. glad to see these long run tasks back again :) | |

| ID: 56779 | Rating: 0 | rate:

| |

|

I got only two each on my hosts. Still glad to have some back if only briefly. | |

| ID: 56780 | Rating: 0 | rate:

| |

|

Work cache is a BOINC feature that was undoubtedly useful in the days that internet access was for many depending on dial-in access. | |

| ID: 56781 | Rating: 0 | rate:

| |

Work cache is a BOINC feature that was undoubtedly useful in the days that internet access was for many depending on dial-in access. this project already limits to 2 per GPU, no matter what size cache limits you have set in BOINC. but with these long running tasks, folks with very old GPUs (think GTX750ti, GT1030, etc) can still struggle to submit even 1 task before the deadline. in these cases it doesn't make sense to cache more than 1 task. personally I only cache 1 task per GPU on these D3RBandit tasks, even on relatively fast GPUs like RTX2070 and RTX2080. I only cache 2 per GPU on my hosts with RTX 2080tis as those can complete 2 tasks within 24hrs, while the others can't quite make the cut (~17hrs on a 2070, ~13hrs on a 2080) ____________  | |

| ID: 56782 | Rating: 0 | rate:

| |
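The caching rule of thumb from the post above can be written down as a small Python sketch. It assumes tasks run back to back on one GPU and that everything cached should finish inside the 24-hour bonus window; the function name is made up, and the 10.5 h figure for the 2080 Ti is only an assumed value consistent with the "10+hrs" mentioned elsewhere in the thread.

```python
import math

PROJECT_LIMIT_PER_GPU = 2  # the project sends at most 2 tasks per GPU


def tasks_to_cache(runtime_hours, window_hours=24.0):
    """How many tasks to hold per GPU so all of them fit in the window."""
    fits = math.floor(window_hours / runtime_hours)
    # Always keep at least one task, never more than the project allows.
    return max(1, min(PROJECT_LIMIT_PER_GPU, fits))


for name, hours in (("RTX 2070", 17), ("RTX 2080", 13), ("RTX 2080 Ti", 10.5)):
    print(name, tasks_to_cache(hours))
```

With these runtimes the sketch suggests 1 task for the 2070 and 2080 and 2 tasks for the 2080 Ti, which matches the choice described in the post.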

|

Hi, do you know if these work units have the bonus credit for prompt turnaround like the previous batch? I am ready to hammer these babies out as quick as possible...I want that next molecule, dammit! | |

| ID: 56783 | Rating: 0 | rate:

| |

|

I've seen no policy changes posted or changed. So they should still have the early reporting credit bonus as all previous tasks. | |

| ID: 56784 | Rating: 0 | rate:

| |

|

Alain Maes wrote:

I thought the Ampere cards (RTX ...) do not function (yet) with GPUGRID ??? | |

| ID: 56785 | Rating: 0 | rate:

| |

Alain Maes wrote: Ampere is RTX3000 series RTX 2060 is fine with GPUGrid. Just finished all my WU's on my RTX2000 Turing cards with no issues. | |

| ID: 56786 | Rating: 0 | rate:

| |

|

Finally 100 million. Yay!! | |

| ID: 56787 | Rating: 0 | rate:

| |

|

Congratz! Big first step. | |

| ID: 56788 | Rating: 0 | rate:

| |

|

Compared to my old gpu it is not very weak. | |

| ID: 56792 | Rating: 0 | rate:

| |

Alain Maes wrote: anyone any guess as to when GPUGRID will make their code fit for Ampere cards ? | |

| ID: 56793 | Rating: 0 | rate:

| |

Compared to my old gpu it is not very weak. but weak in comparison to other GPUs available. a 1080ti will take 18hrs. and 2080ti takes 10+hrs. so it makes sense that something like a 1650 would take several days. these new tasks are really huge. ____________  | |

| ID: 56794 | Rating: 0 | rate:

| |

Alain Maes wrote: No ETA, and the project admins/devs seem very tight lipped about it. Many have asked about it several times and no response. ____________  | |

| ID: 56795 | Rating: 0 | rate:

| |

... and no response. which is, unfortunately, nothing unusual here :-( | |

| ID: 56796 | Rating: 0 | rate:

| |

|

Written on March 19th 2021, 18:16 UTC: Maybe somebody's done a rain dance, and some WUs are flowing just now... ...Which implies that on Wednesday 24th (five days later) an aftershock wave of overdue tasks might be coming. Have your fishing lines ready! | |

| ID: 56799 | Rating: 0 | rate:

| |

|

Looks like the timeout resends are already starting to flow. | |

| ID: 56800 | Rating: 0 | rate:

| |

|

Yes, I picked up work for all my hosts. | |

| ID: 56801 | Rating: 0 | rate:

| |

|

I have a RTX 2080 Super with a I9-9900K @ 3.60GHz | |

| ID: 56807 | Rating: 0 | rate:

| |

|

You need to regularly ask for work to get any. | |

| ID: 56808 | Rating: 0 | rate:

| |

|

A couple of these ones just popped up on my desktop machine. Haven't seen those before. | |

| ID: 56809 | Rating: 0 | rate:

| |

You need to regularly ask for work to get any. So in other words GPU Grid has gone the way of another GPU project in TX that used Boinc to farm out small stuff and ran the massive projects on their super computer. That was also hit and miss. Well, nice knowing you GPU Grid, time to look for something else now. | |

| ID: 56810 | Rating: 0 | rate:

| |

what other project are you referring to? but, not sure how you've come to that conclusion, or what you consider "large" or "small" projects, is this based on individual task run times? or the duration of WU availability? don't think GPUGRID has a supercomputer. we ARE their supercomputer. it takes time and money to form the data sets necessary to crunch. and it takes time to petition to get the money to enable their research. some projects have huge backers and lots of resources and can seemingly run continuously indefinitely, while others run on a shoestring budget and/or only have data intermittently. This project has always been hit or miss. sometimes with long running tasks, sometimes with very short tasks (like the MDAD series some months ago), sometimes with constant work supply for months, sometimes with short bursts of work with extended dry spells. If you're looking for something with similar research, using GPUs, and an "infinite" supply of work, look into Folding@home. ____________  | |

| ID: 56811 | Rating: 0 | rate:

| |

|

I am curious to hear how long it would take to run 1 of these big tasks on Windows 10 with a RTX 2070? TIA | |

| ID: 56817 | Rating: 0 | rate:

| |

I am curious to hear how long it would take to run 1 of these big tasks on Windows 10 with a RTX 2070? TIA rod4x4's comprehensive runtime summary, which includes the RTX 2070, is available here: ADRIA D3RBandit task runtime summary | |

| ID: 56818 | Rating: 0 | rate:

| |

|

I just wish that my Nvidia RTX 2080 graphics card and my Nvidia GTX 1080 in another PC would get something to do. It seems everything has stalled and I receive no tasks .. | |

| ID: 56819 | Rating: 0 | rate:

| |

I just wish that my Nvidia RTX 2080 graphics card and my Nvidia GTX 1080 in another PC would get something to do. It seems everything has stalled and I receive no tasks .. the tasks are running thin the past few days. the project seems to not be distributing new work right now. only expect a random resend every now and then until the project starts creating tasks again. ____________  | |

| ID: 56820 | Rating: 0 | rate:

| |

... the tasks are running thin the past few days. let's face it: NOT the past few days, rather the past few weeks :-( And it's too bad that we are not given even tentative information as to when we can expect new tasks. | |

| ID: 56821 | Rating: 0 | rate:

| |

|

i had a rather consistent run of work until only a few days ago. | |

| ID: 56822 | Rating: 0 | rate:

| |

|

The current week wasn't a working week at Spanish universities. | |

| ID: 56823 | Rating: 0 | rate:

| |

|

What's this? | |

| ID: 56828 | Rating: 0 | rate:

| |

|

13hrs? wow! it doesn't look like you've submitted that task yet though. your host details says still in progress. | |

| ID: 56829 | Rating: 0 | rate:

| |

|

Now that you mention it Ian, I found it running in tandem with a FAHcore task when it was already 50% finished. I paused the F@H task and the rest went much faster. I see the CPU time was around half an hour less, showing that this machine was a bit overloaded by me running Winamp with MilkDrop visualization in the desktop mode. Meanwhile I was web-browsing, mail, etc. | |

| ID: 56830 | Rating: 0 | rate:

| |

|

I wish they would leak out a few more of these one-offs. My RAC is plummeting. | |

| ID: 56831 | Rating: 0 | rate:

| |

I wish they would leak out a few more of these one-offs. My RAC is plummeting.+1 | |

| ID: 56832 | Rating: 0 | rate:

| |

|

Re: D3RBanditTest | |

| ID: 56834 | Rating: 0 | rate:

| |

|

Any news on WU's coming up? | |

| ID: 56835 | Rating: 0 | rate:

| |

|

Got a couple of the crypticscout_pocket_discovery work units last night. | |

| ID: 56836 | Rating: 0 | rate:

| |

Keith, was that by chance a resend? I ask because there are currently only 16 in progress. | |

| ID: 56838 | Rating: 0 | rate:

| |

Nope, initial two task replications. https://www.gpugrid.net/workunit.php?wuid=27048098 https://www.gpugrid.net/workunit.php?wuid=27048097 | |

| ID: 56839 | Rating: 0 | rate:

| |

|

Hello: I have a question about GPUGRID tasks. I have tried twice and the same problem occurs every time. I can log in normally, but I can't get any tasks! How can I troubleshoot this problem? | |

| ID: 56840 | Rating: 0 | rate:

| |

|

There is no work being produced, or very, very little actually. | |

| ID: 56841 | Rating: 0 | rate:

| |

There is no work being produced, or very, very little actually. This is all quite unfortunate. I was a long time believer in gpugrid going back to when they could run on playstations. I recall they "owned" the ps2grid name at one time. WCG quit developing for GPUs some time ago but I switched to them as soon as I found out they were developing again. Their GPU tasks are all beta so one has to sign up for the beta program. Some statistics I put together there https://www.worldcommunitygrid.org/forums/wcg/viewthread_thread,43367 | |

| ID: 56842 | Rating: 0 | rate:

| |

|

They have left beta and are now in production, but with a low number of work units (1700 work units on average every 30 minutes). | |

| ID: 56843 | Rating: 0 | rate:

| |

unfortunately, no indication at this time :-( Also, so far there is no progress concerning Ampere cards :-( while Ampere works on WCG and F@H | |

| ID: 56844 | Rating: 0 | rate:

| |

|

I have caught a few World Community Grid GPU tasks. IIRC, they were OpenCL. They ran concurrently with FahCore CUDA tasks without too much slowdown. I also noted that some WCG tasks ran on my Intel GPUs. Intel GPUs are being used also by Einstein@home in OpenCL. | |

| ID: 56845 | Rating: 0 | rate:

| |

I don't see anything suggesting this project is close to "completing the crunching stage of the project"; however, I do believe they are entering the "home stretch" on "DS3". To bring this thread back on topic: I have not received any D3RBanditTest tasks (I am aware they are hard to come by). | |

| ID: 56846 | Rating: 0 | rate:

| |

|

Wow - I caught a Bandit! task 32565770: I happened to ask 1 second after it was created. | |

| ID: 56849 | Rating: 0 | rate:

| |

but weak in comparison to other GPUs available. a 1080ti will take 18hrs. and 2080ti takes 10+hrs. so it makes sense that something like a 1650 would take several days. these new tasks are really huge. Several days with a GTX 1650 at stock speed? (I always run my GPU at stock speed for BOINC work.) My RTX 2060 died, and the 1650 was the best I could get so far (and it actually runs pretty cool on tasks where my old, and probably defective, RTX ran at least 15°C hotter). Also, I know some projects are not Ampere-ready yet, so I'm sticking with Turing for now. So the question now is: do these long new tasks on GPUGRID checkpoint, that is, do they save the work already done when the task is stopped? I'd like to at least try (and complete) one of these tasks. But if it means running a GPU task for 10 hours and losing the work at the end of the day, I think we'll agree it's not worth it under those conditions. Meanwhile... yeah, WCG has started to drop GPU tasks that work with the Ampere architecture as well as previous architectures (I asked on their forum). But they are very few and very fast tasks. | |

| ID: 56850 | Rating: 0 | rate:

| |
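For anyone weighing the same question, here is a rough sketch of the arithmetic, not anything the project provides. It assumes the task checkpoints (so stopping each day loses no work), uses the 5-day deadline mentioned earlier in this thread, and takes a total runtime estimate you supply yourself. The function names are made up for illustration.

```python
import math


def days_to_finish(runtime_hours: float, hours_per_day: float) -> int:
    """Calendar days needed when the GPU crunches `hours_per_day` each day.

    Assumes checkpointing works, so no progress is lost between sessions.
    """
    if runtime_hours < 0:
        raise ValueError("runtime_hours must be non-negative")
    if not 0 < hours_per_day <= 24:
        raise ValueError("hours_per_day must be in (0, 24]")
    return math.ceil(runtime_hours / hours_per_day)


def meets_deadline(runtime_hours: float, hours_per_day: float,
                   deadline_days: float = 5) -> bool:
    """True if the task finishes within the deadline (5 days assumed by default)."""
    return runtime_hours / hours_per_day <= deadline_days


if __name__ == "__main__":
    # Example: a task estimated at 50 GPU hours, crunched 10 hours per day.
    print(days_to_finish(50, 10), meets_deadline(50, 10))
    # Same task at 8 hours per day.
    print(days_to_finish(50, 8), meets_deadline(50, 8))
```

With an estimate of around 50 hours, 10 hours a day lands exactly on the deadline with no margin, so anything less than that daily budget would miss it.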

|

The tasks checkpoint and can be stopped and restarted . . . . as long as the task is restarted on the same type of device. | |

| ID: 56852 | Rating: 0 | rate:

| |
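To make the "same type of device" restriction concrete, here is a purely illustrative sketch of a checkpoint that records which device wrote it and refuses to resume on a different one. This is not how the GPUGRID application is implemented; the file layout, the function names, and the device strings are all assumptions for the example.

```python
import json
import tempfile
from pathlib import Path


def save_checkpoint(path, step: int, device_name: str) -> None:
    """Write the current step and the device that produced it (hypothetical format)."""
    Path(path).write_text(json.dumps({"step": step, "device": device_name}))


def load_checkpoint(path, current_device: str) -> int:
    """Return the step to resume from, or 0 if no checkpoint exists.

    Raises RuntimeError when the checkpoint came from a different device type.
    """
    checkpoint = Path(path)
    if not checkpoint.exists():
        return 0
    state = json.loads(checkpoint.read_text())
    if state["device"] != current_device:
        raise RuntimeError(
            f"checkpoint was written on {state['device']}, "
            f"cannot resume on {current_device}"
        )
    return state["step"]


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as workdir:
        ckpt = Path(workdir) / "checkpoint.json"
        save_checkpoint(ckpt, 1200, "GTX 1060")
        print(load_checkpoint(ckpt, "GTX 1060"))  # resumes on the same device type
        try:
            load_checkpoint(ckpt, "RTX 2080")
        except RuntimeError as err:
            print("resume refused:", err)
```

On a host with mixed GPUs, the practical takeaway is the same as in the post above: avoid suspending a task if it could be picked up again by a different card.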

The tasks checkpoint and can be stopped and restarted . . . . as long as the task is restarted on the same type of device. Thank you for the answers concerning the checkpoints. Good to know as well that you cannot change the device a task is "linked" to once it has begun working on that specific task. Waiting for tasks now, but I'm not in a hurry; the GPU is working on another task for now (more as a test of my hardware, but it still has at least 10 hours to run). | |

| ID: 56853 | Rating: 0 | rate:

| |

|

I am getting World Community Grid OpenPandemics-COVID-19 GPU tasks which take about three to four minutes on my GTX 1060 board. | |

| ID: 56854 | Rating: 0 | rate:

| |

|

The new beta "stress" WCG OPNG tasks are much larger and take up to 15 minutes to compute on an RTX 2080. | |

| ID: 56855 | Rating: 0 | rate:

| |

|

The OPNG vary in length, and are not marked beta anymore, for whatever that is worth. So you have to average the times. | |

| ID: 56857 | Rating: 0 | rate:

| |

|

Sorry, double post. | |

| ID: 56858 | Rating: 0 | rate:

| |

|

The latest OPNG tasks took about 20 minutes on my GTX 1060. In the first 7 minutes the GPU was not engaged. | |

| ID: 56859 | Rating: 0 | rate:

| |

|

Glad to see a large batch of these new units coming out. should keep us well fed for another few weeks. | |

| ID: 56885 | Rating: 0 | rate:

| |

Glad to see a large batch of these new units coming out. I suspect they still won't run on Ampere cards ? | |

| ID: 56886 | Rating: 0 | rate:

| |

|

nope. still CUDA 10.0/10.1 = no Ampere support. still waiting for that CUDA 11.1+ app. | |

| ID: 56887 | Rating: 0 | rate:

| |

unless you see "cuda111" or "cuda112" listed, don't count on Ampere support thanks for the information; what a pity :-( | |

| ID: 56888 | Rating: 0 | rate:

| |
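The rule of thumb in the posts above can be written down as a small check. This is only a sketch: it assumes the plan class names follow the "cuda" plus version-digits pattern quoted in the thread (cuda100, cuda101, cuda111, cuda112), and it takes the CUDA 11.1 threshold from the posts rather than from any project statement. The function names are made up for illustration.

```python
import re

# Minimum CUDA version the thread associates with Ampere support (CUDA 11.1+).
AMPERE_MIN_CUDA = (11, 1)


def parse_cuda_plan_class(plan_class: str):
    """Return (major, minor) for names like 'cuda101', or None if the name does not match."""
    match = re.fullmatch(r"cuda(\d{2,3})", plan_class.strip().lower())
    if match is None:
        return None
    digits = match.group(1)
    # The last digit is the minor version; the digits before it are the major version.
    return int(digits[:-1]), int(digits[-1])


def supports_ampere(plan_class: str) -> bool:
    """True if the plan class names a CUDA version of at least 11.1."""
    version = parse_cuda_plan_class(plan_class)
    return version is not None and version >= AMPERE_MIN_CUDA


if __name__ == "__main__":
    for name in ("cuda100", "cuda101", "cuda111", "cuda112"):
        print(name, supports_ampere(name))
```

Run against the application list on the project's apps page, this would flag only the cuda111 and cuda112 entries, which matches the advice above.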

|

Does anyone know by any chance, what the current batch of tasks (D3RBandit) are all about? What do we compute? | |

| ID: 56892 | Rating: 0 | rate:

| |

|

Lately I've caught lots of WUs that have bounced off one or more hosts running Ampere GPUs. There is much untapped resource, even with the new anti-mining feature. | |

| ID: 56893 | Rating: 0 | rate:

| |

Lately I've caught lots of WUs that have bounced off one or more hosts running Ampere GPUs. There is much untapped resource, even with the new anti-mining feature. I gave up waiting for Ampere support here. It was clear the project devs have it at lowest priority (every time I asked about it, I was ignored, even when they were responsive about any other topic). I traded my 3070 for a 2080ti and moved on. ____________  | |

| ID: 56894 | Rating: 0 | rate:

| |

|

Ian (and Bozz4science), something else we're apparently not allowed to ask is what it is we are crunching. | |

| ID: 56899 | Rating: 0 | rate:

| |

|

Yeah, sadly that is very disappointing to say the least. Information policy here is annoying sometimes due to its non-existence. I am just a small fish with my little machine, but it would certainly drive me nuts not getting a single statement from the project team with respect to planned Ampere support. | |

| ID: 56903 | Rating: 0 | rate:

| |

Yeah, sadly that is very disappointing to say the least. Information policy here is annoying sometimes due to its non-existence. I am just a small fish with my little machine, but it would certainly drive me nuts not getting a single statement from the project team with respect to planned Ampere support. + 1 | |

| ID: 56905 | Rating: 0 | rate:

| |

Yeah, sadly that is very disappointing to say the least. Information policy here is annoying sometimes due to its non-existence. I am just a small fish with my little machine, but it would certainly drive me nuts not getting a single statement from the project team with respect to planned Ampere support. +1 (adding "Please") <--| ->-----------------------------| | |

| ID: 56907 | Rating: 0 | rate:

| |

|

over 4000 tasks ready to send. | |

| ID: 56908 | Rating: 0 | rate:

| |

|

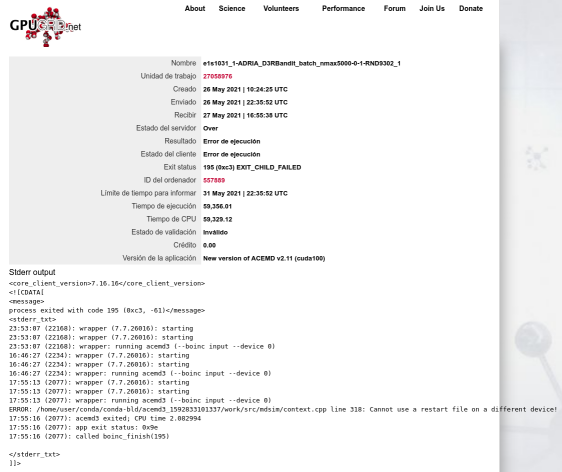

The error on the D3RBandit task below wasn't due to the known "restart on a different device" problem. | |

| ID: 56909 | Rating: 0 | rate:

| |

|

I've seen this happen on occasion too. Definitely have to be more careful with these long running D3RBandit tasks, or risk throwing away a lot of computation time. | |

| ID: 56910 | Rating: 0 | rate:

| |

|

These current WUs perform worse than anything I've ever seen from GG. Far more failures than even WUs that run. | |

| ID: 56914 | Rating: 0 | rate:

| |

These current WUs perform worse than anything I've ever seen from GG. Far more failures than even WUs that run. sounds like something wrong on your end. I've had very few failures. I have only 2 legitimate computation errors with the latest D3RBandit series, from any of my systems, of the hundreds of tasks that I've processed in the past few weeks. that's not including things like me aborting them for whatever reason, or the server cancelling a resend, or a download error. one of the failures was a bad WU (all hosts failed) the other looks like it was some random problem on my host, as it was processed by another host eventually if you un-hide your hosts, I might be able to see what the problem is. ____________  | |

| ID: 56915 | Rating: 0 | rate:

| |

These current WUs perform worse than anything I've ever seen from GG. Far more failures than even WUs that run. That would be explained if you had upgraded your hosts to Ampere GPUs. That series of graphics cards is not supported by current Gpugrid applications, and every task will fail immediately. | |

| ID: 56918 | Rating: 0 | rate:

| |

|

Those Anaconda Python 3 Environment tasks admittedly had a very high failure rate, but they were part of a test batch with no intent to compute on actual data. D3RBandit tasks are finishing just fine except for the known suspend/resume issue. | |

| ID: 56919 | Rating: 0 | rate:

| |